Active projects

| EPSRC overseas travel grant: Advancing Cognitive Architectures For Robots Via UK–Italy Collaboration Using Abel |
|---|

Introducing our robots

Cognitive Robotics and Autonomous Systems (CoRAS) Lab

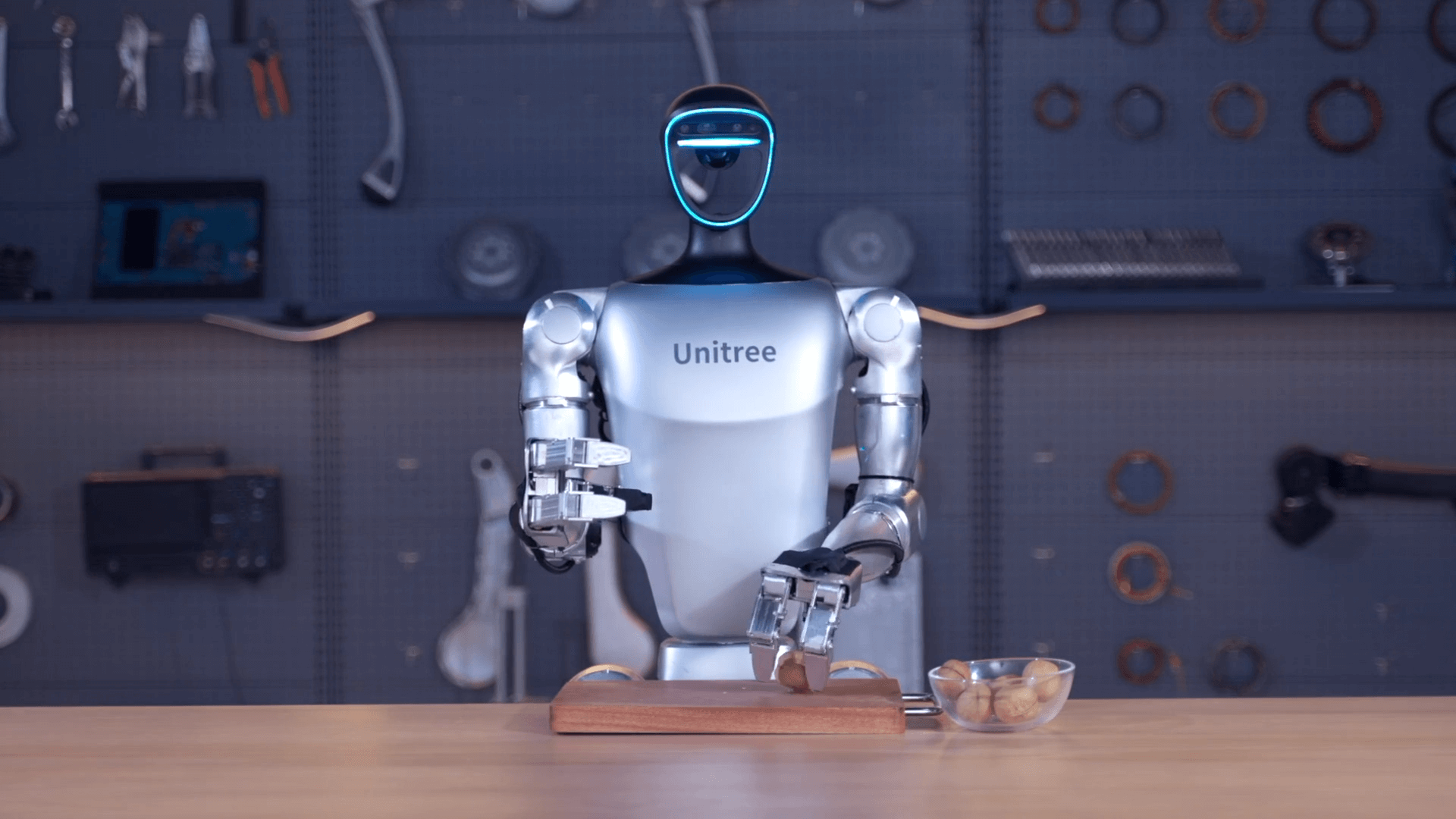

The Unitree G1 is a compact, AI-driven humanoid platform designed for dynamic movement and precise manipulation, with 43 joints for human-like mobility. It combines learning-based control (imitation and reinforcement learning) with depth cameras and LiDAR for autonomous perception and operation. The robot also features dexterous three-finger hands capable of force-controlled manipulation.

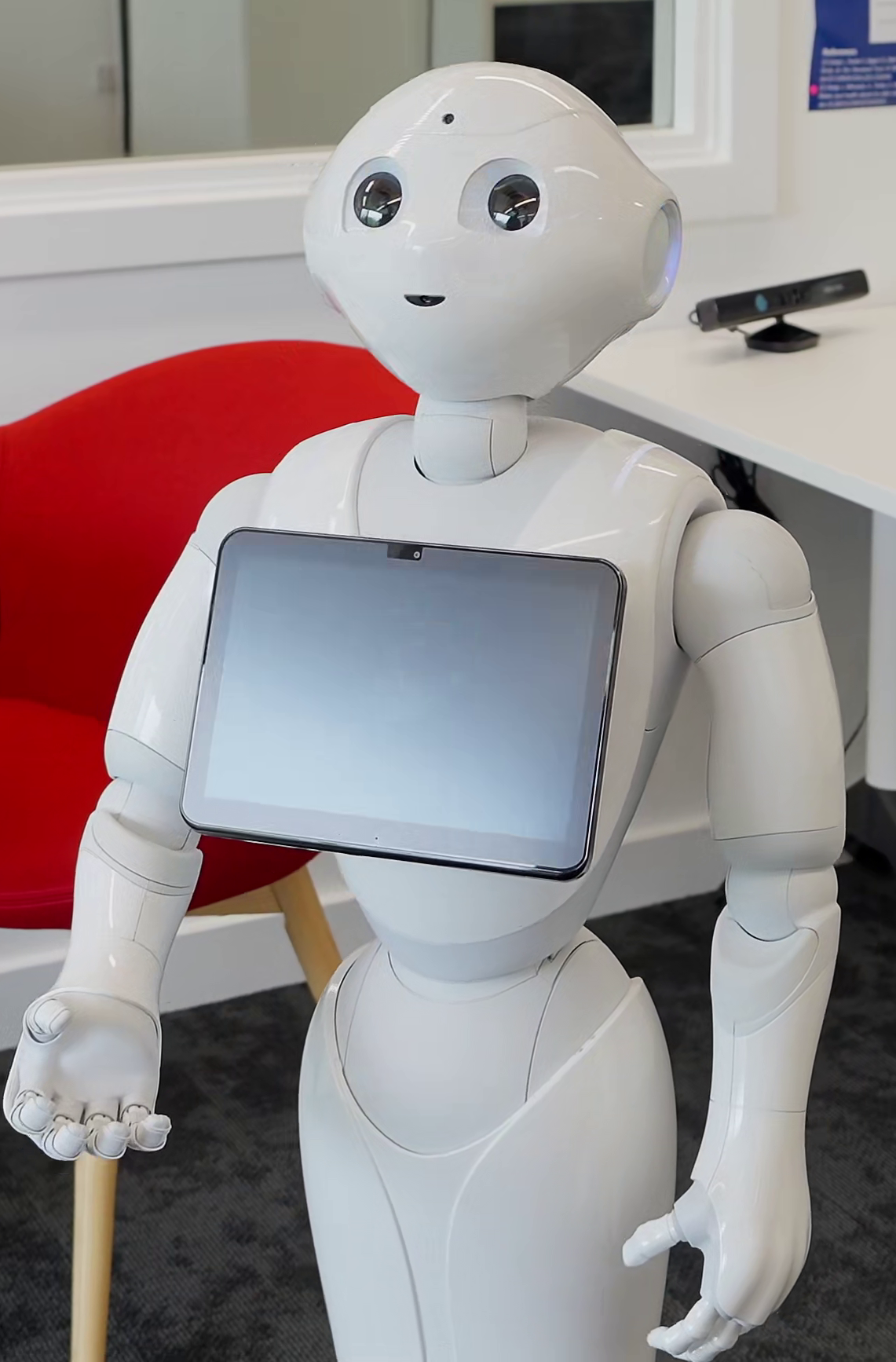

Pepper is a pioneering social humanoid robot developed to facilitate human-robot interaction. Pepper integrates advanced technologies for people tracking and emotion recognition. It is a versatile platform for research and applications in social robotics.

Nao is a small humanoid robot used in research, education, and social interaction studies. It is equipped with sensors, cameras, and microphones to perceive its environment, and it communicates through speech, gestures, and programmable actions. Its design makes it well suited to studying learning and autonomous behaviour in robots.

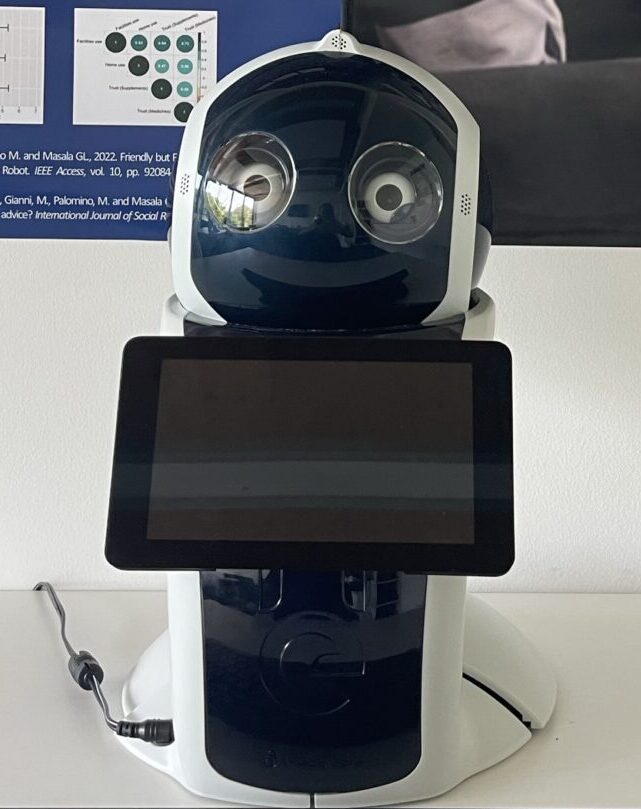

Amy A1 is an Android-based service robot designed for interactive and assistive applications. It features advanced speech recognition, autonomous navigation, and multi-mode information display.

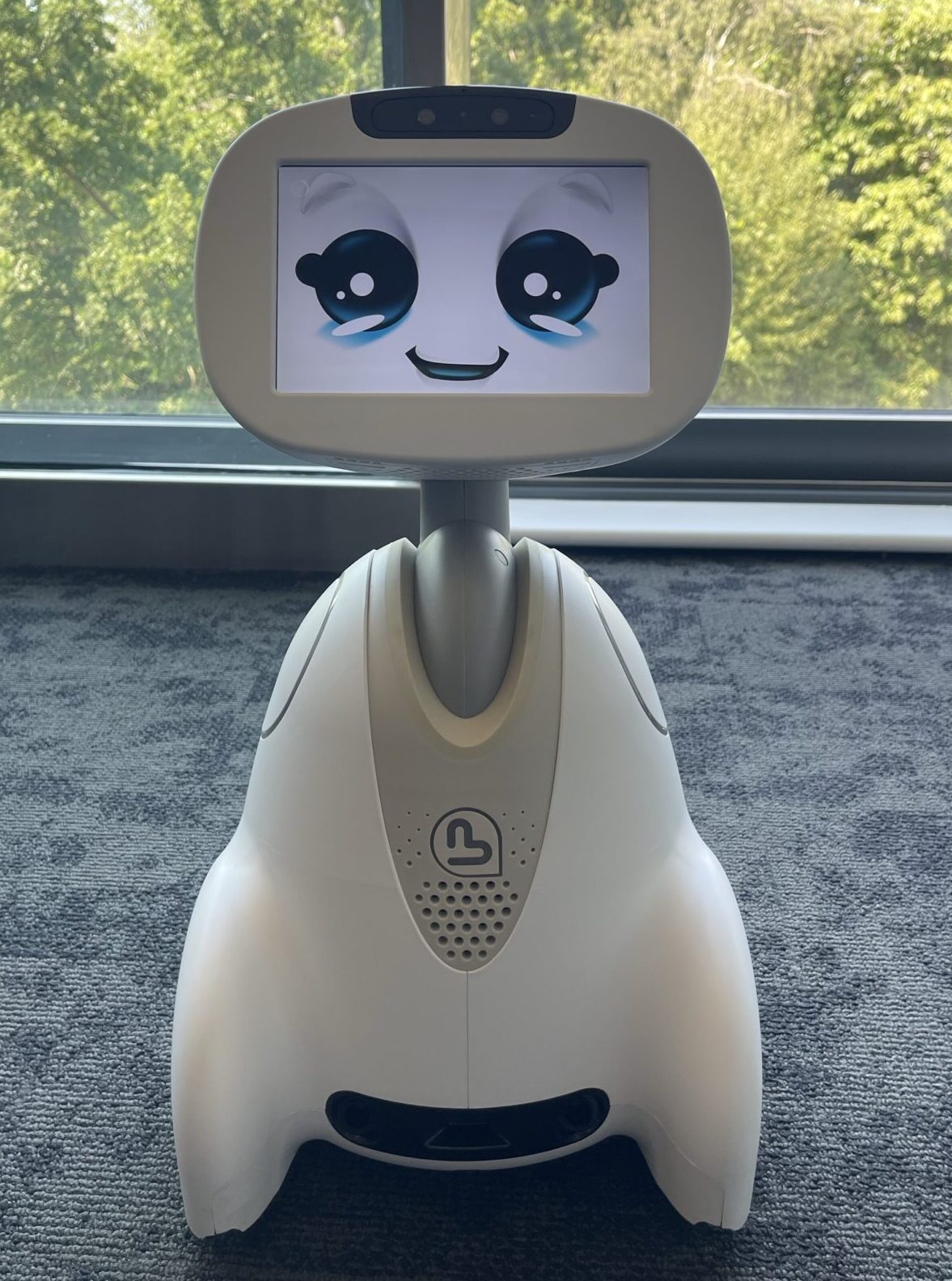

Buddy is an Android-based social robot designed to foster connections with people: its expressive face can display a range of emotions, making interactions feel natural and engaging. It serves as a versatile assistant for education, entertainment, and human-robot interaction.

Q.bo One is an open-source robot built on Raspberry Pi and Arduino. It features stereoscopic vision, omnidirectional voice interaction, LED-based speech synchronisation, and touch sensors. It can be programmed in Scratch by beginners and customised in Python for more advanced projects.

The Unitree Go2 robot dog is an agile, AI-powered quadruped designed for dynamic locomotion and intelligent interaction. It weighs around 15 kg and can run at speeds of up to 5 m/s. It uses a 4D LiDAR and onboard AI to perceive its environment, enabling autonomous navigation, obstacle avoidance, and terrain adaptation, and it relies on reinforcement learning to perform complex movements such as climbing, jumping, and stable traversal.

The AgileX Cobot S Kit is a ROS-based mobile manipulation robot that integrates an omnidirectional mobile base, a 6-axis robotic arm with gripper, and a multi-sensor perception system into a single research platform. Designed for embodied AI and robotics experimentation, it supports autonomous navigation, real-time 3D mapping, and object manipulation in dynamic environments, with onboard computing for AI workloads.

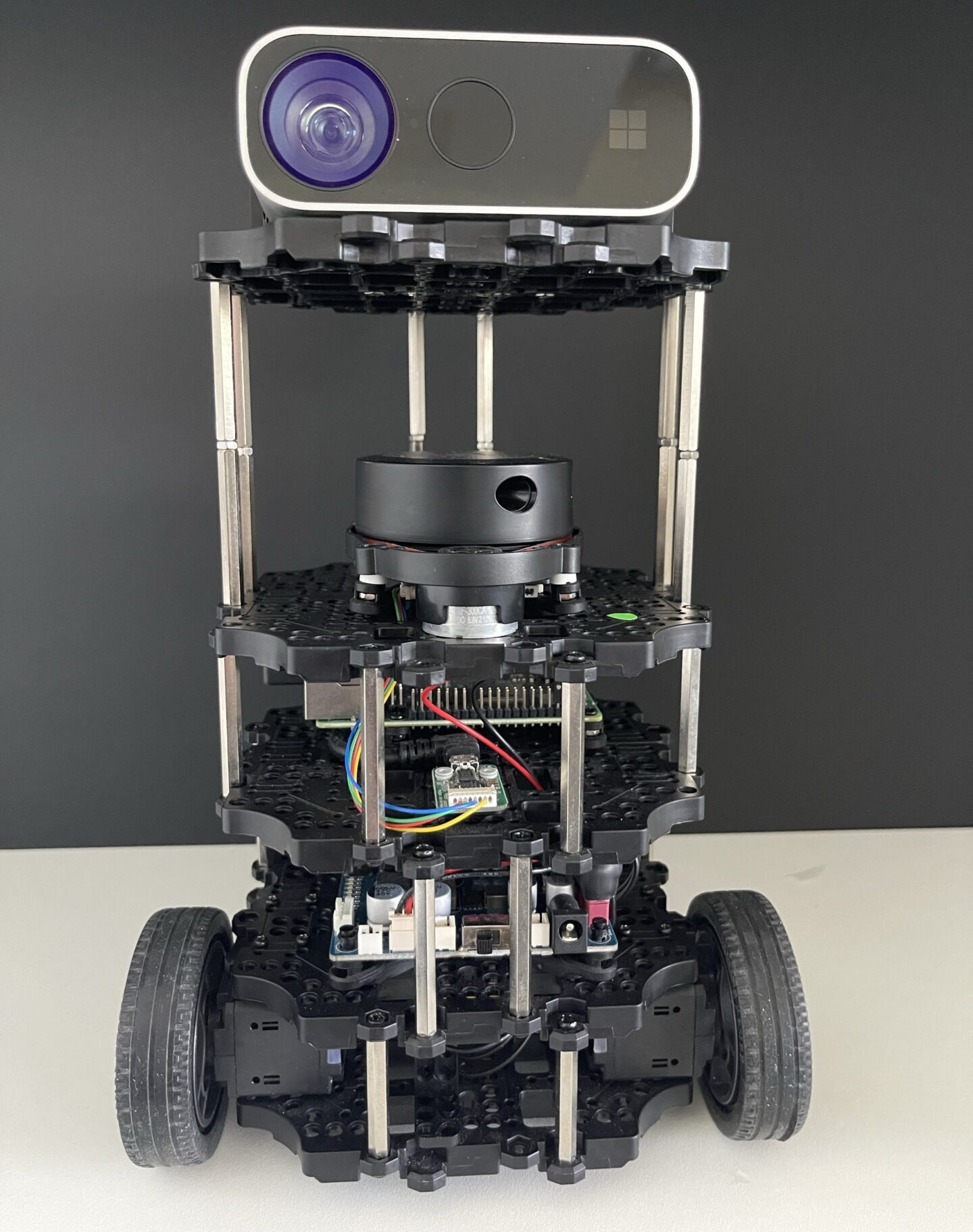

TurtleBot3 Burger is a compact, affordable, and customisable ROS-based mobile robot. Key features include laser-based SLAM (simultaneous localisation and mapping) for building maps, autonomous navigation, and person following (by tracking leg movement). It can be expanded with adjustable mechanical parts and optional components such as sensors and computers; for example, the Burger in the image is equipped with a Kinect depth camera. Our fleet comprises four TurtleBot3 Burger robots.

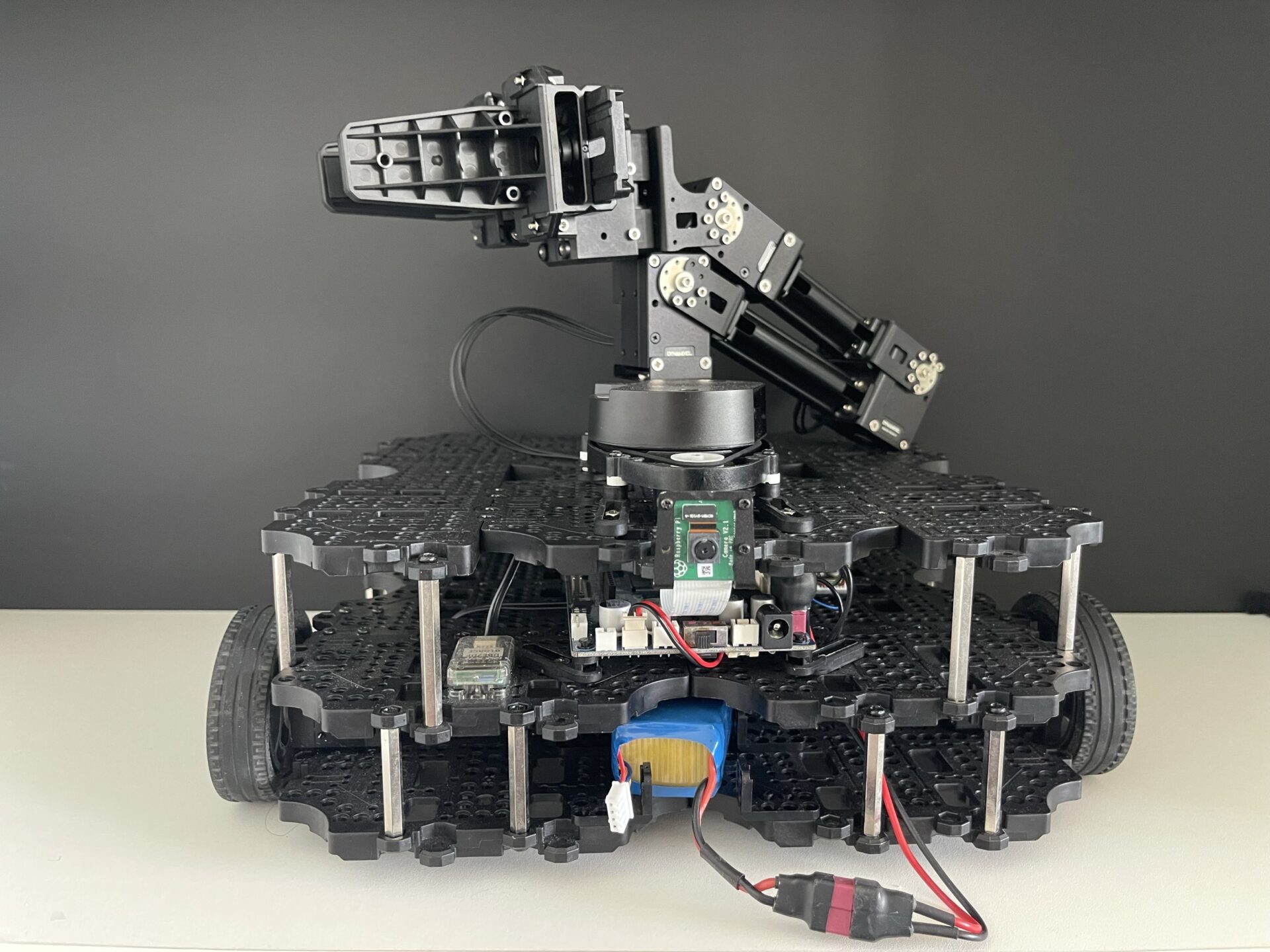

The TurtleBot3 Waffle (Raspberry Pi 4) is a variant of the TurtleBot3 mobile robot with enhanced features, such as an RGB-D camera that supports object detection and recognition and feature-based visual SLAM (VSLAM), alongside laser-based SLAM using its 360-degree laser sensor. Its higher payload capacity allows an OpenManipulator robotic arm and gripper to be mounted, making it a more complete service robot with manipulation capabilities.